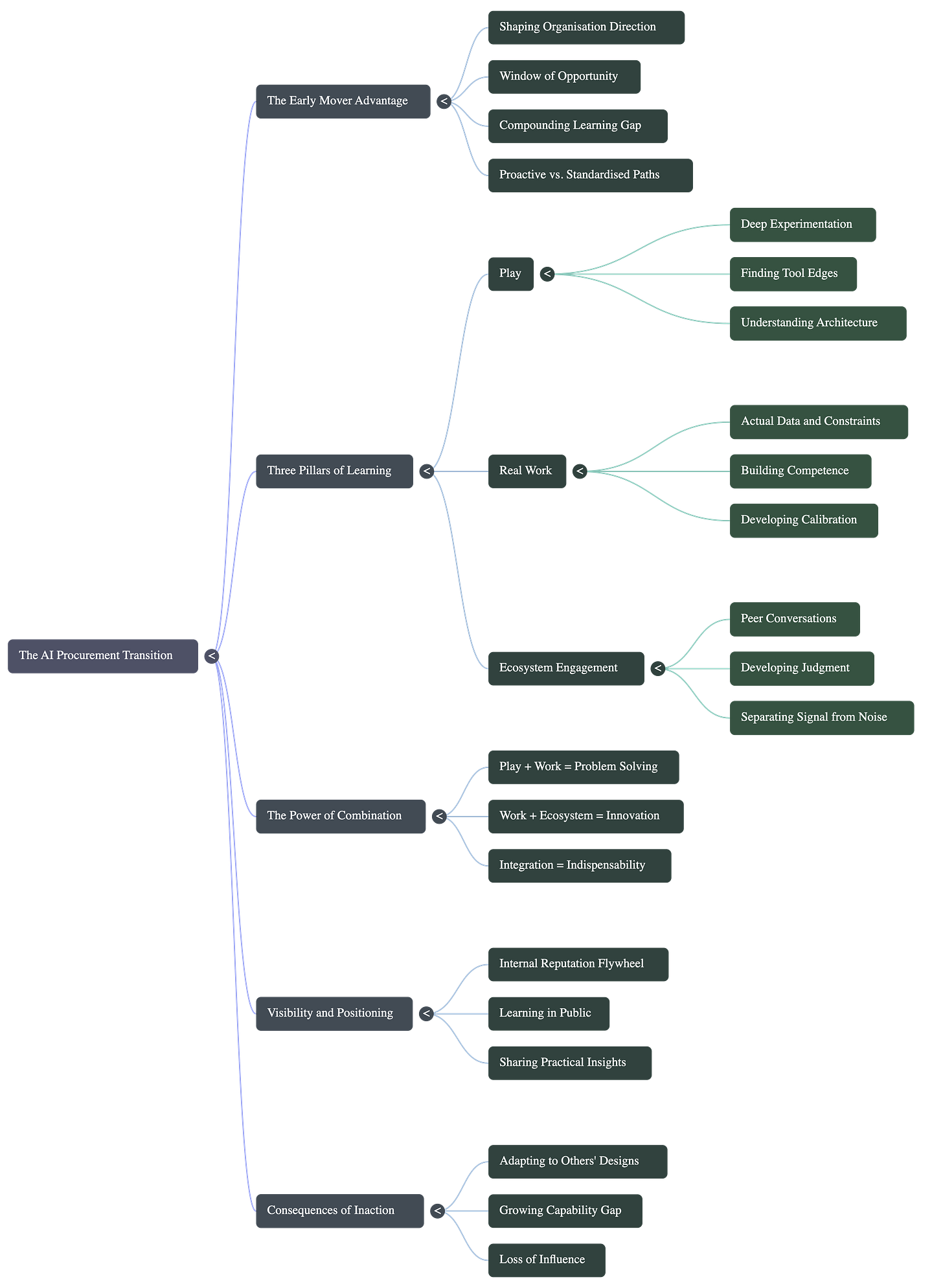

What It Actually Takes

Part 3 of the Procurement Careers in the AI Era series

The people who thrive through the AI transition won’t be the ones who waited for HR to announce a training program. They won’t be the ones who asked their manager “what should I learn?”

They’ll be the ones who positioned themselves before the org chart changed.

But here’s what most people writing about this won’t tell you: it’s not easy. It’s not a weekend project. And it’s definitely not just a matter of playing around with a few tools and reading the right newsletters.

The Window Is Open — But Not Forever

There’s a window in every major technology transition where early movers get to shape their organisation’s direction. That window is open now for AI in procurement.

The dynamics are clear. Leadership knows AI matters but isn’t sure what to do. IT is experimenting but lacks procurement domain expertise. Procurement teams are curious but waiting for guidance.

This creates opportunity.

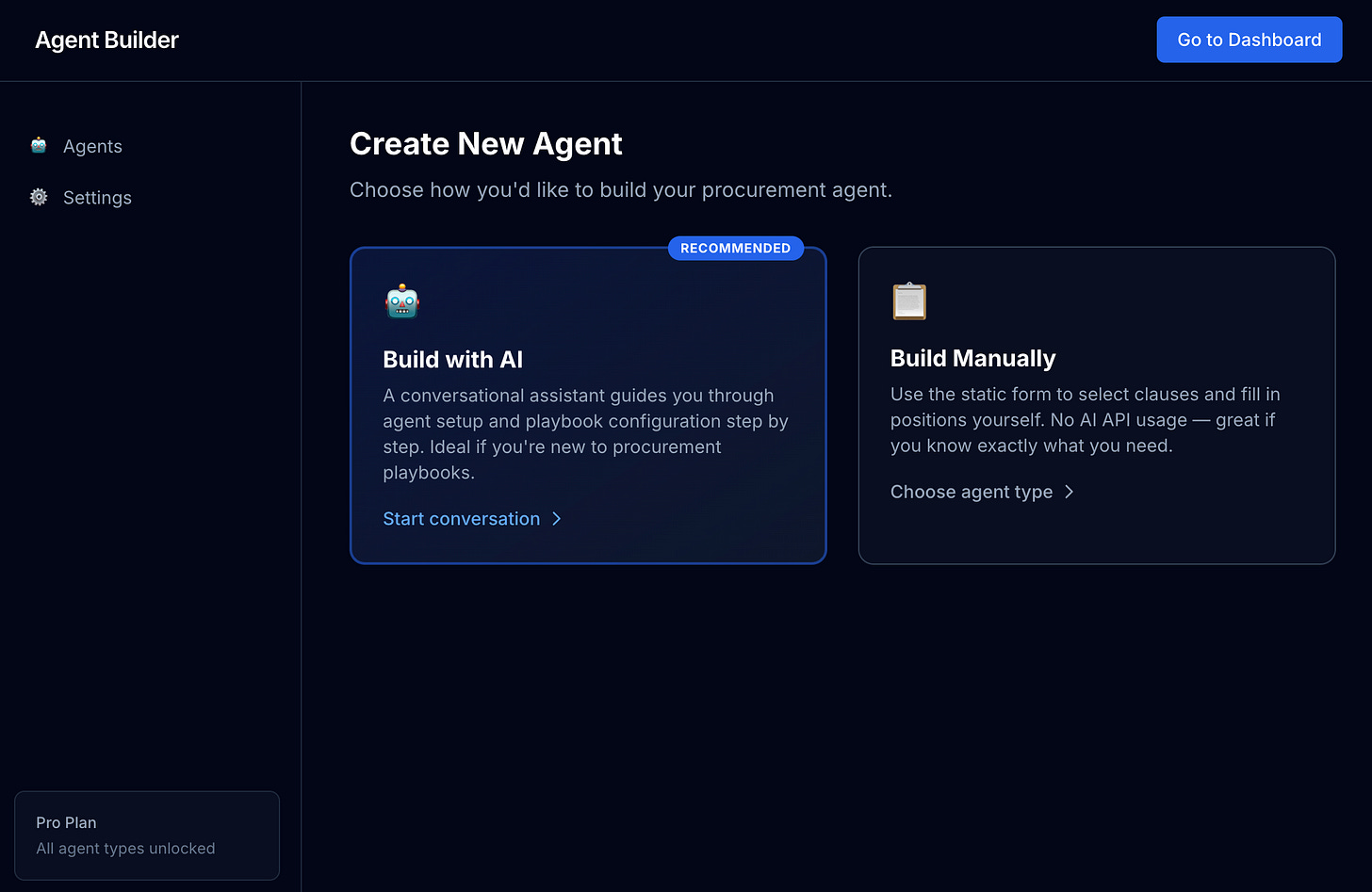

The person who steps forward with a specific proposal (“Let me pilot this tool on this workflow”) gets to shape how AI integrates into the function. The person who waits for the formal training programme gets the standardised version someone else designed.

Both paths lead somewhere. Only one puts you in the driver’s seat.

The window doesn’t stay open forever. It’s not closing tomorrow, but the early mover advantage shrinks over time. The people who started building twelve months ago have twelve months on you. The people who start now will have twelve months on the people who start next year.

What “Learning AI” Actually Requires

This is where most of the advice falls apart.

The popular version of “learning AI” involves following a few newsletters, prompting ChatGPT occasionally, maybe watching a YouTube video about agents. It’s very accessible and produces very little.

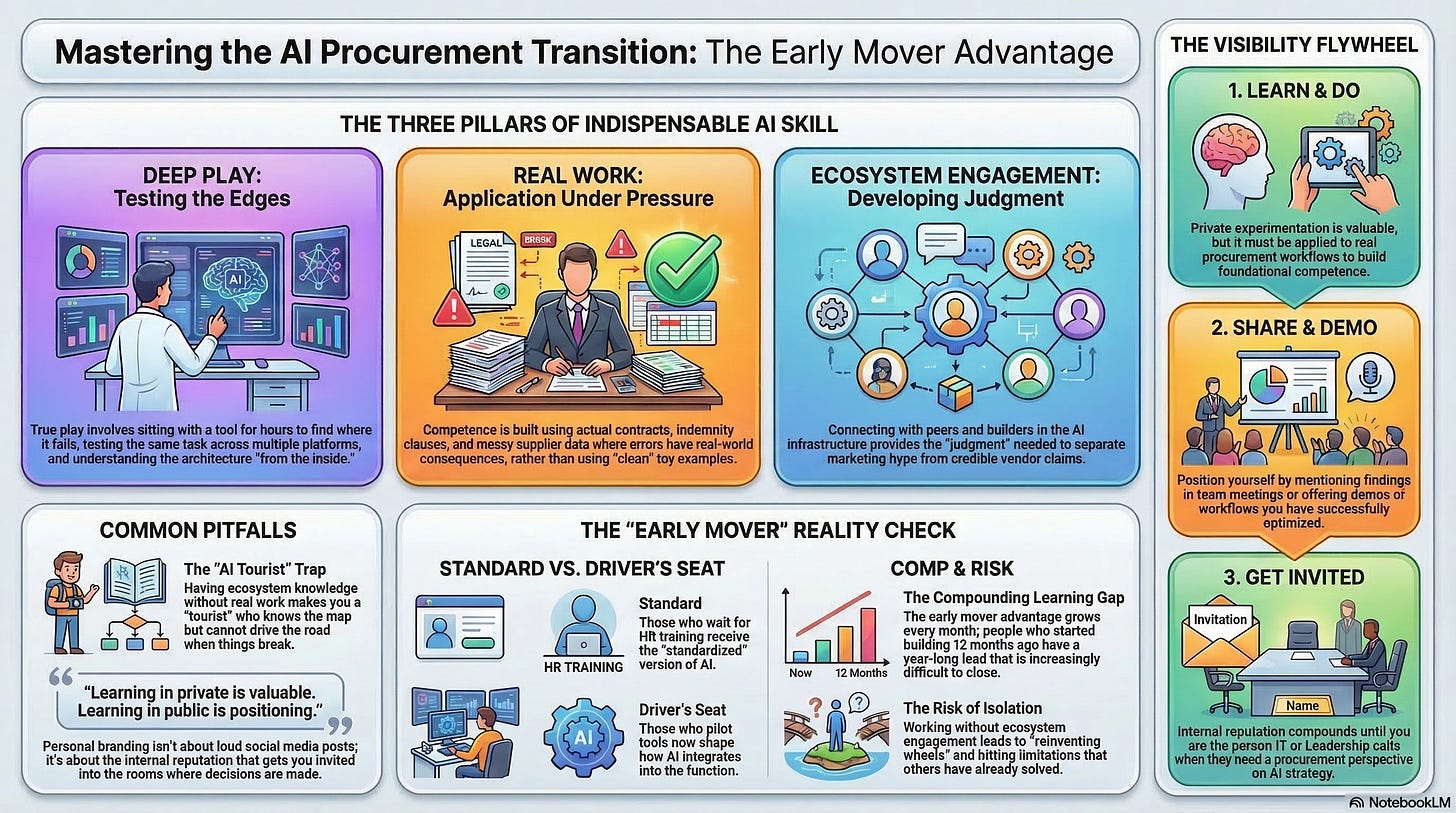

The real version is harder. It involves three things working together. Separately, each one is insufficient. Together, they create something that genuinely compounds.

Play

You need to experiment, and that means proper experimentation — not just running the demos that impress at dinner parties.

Real play looks like sitting with a tool for an evening trying to do something you’re not sure it can do. Finding the edges of what works. Getting frustrated when a prompt doesn’t do what you expected and figuring out why. Testing the same task across different tools to see where the differences actually matter.

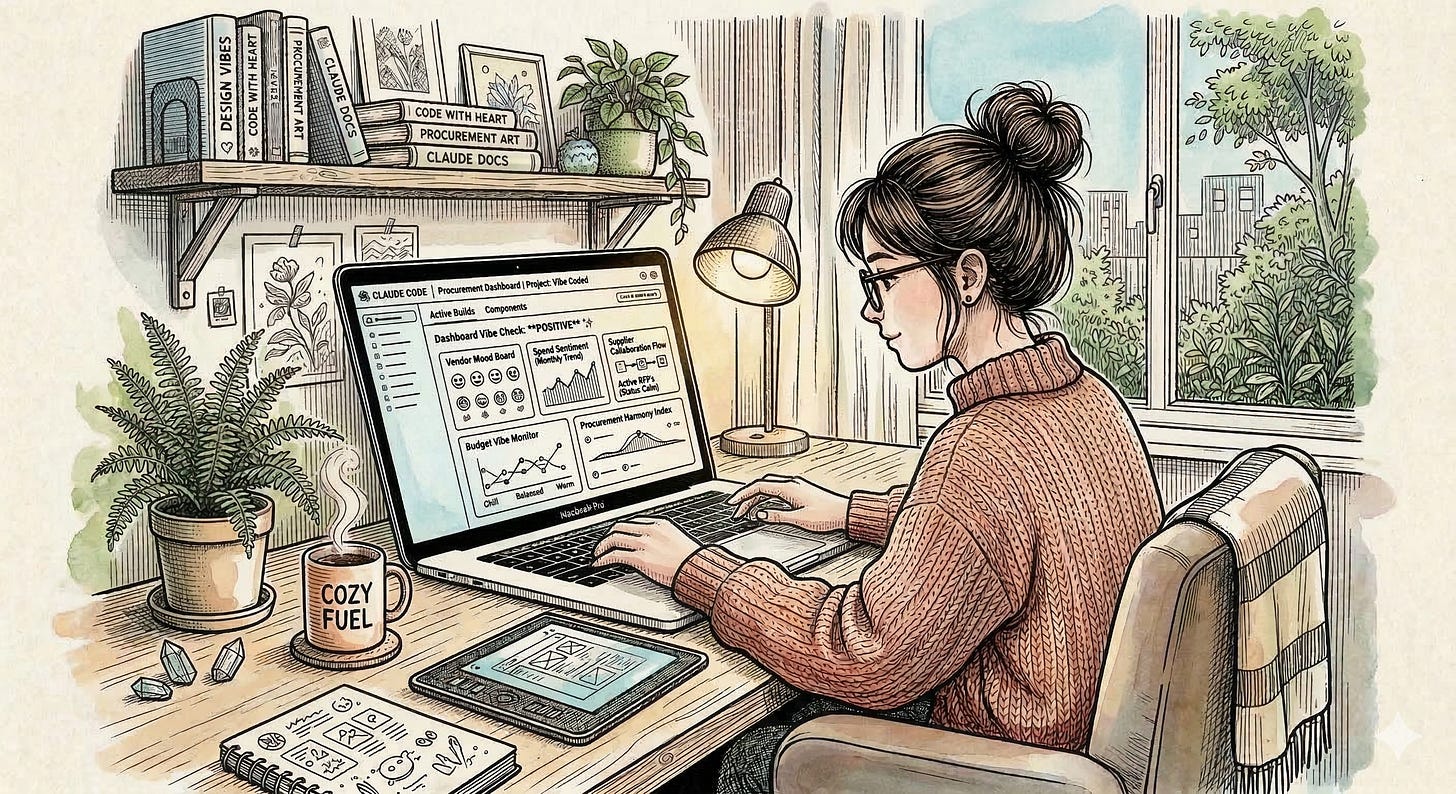

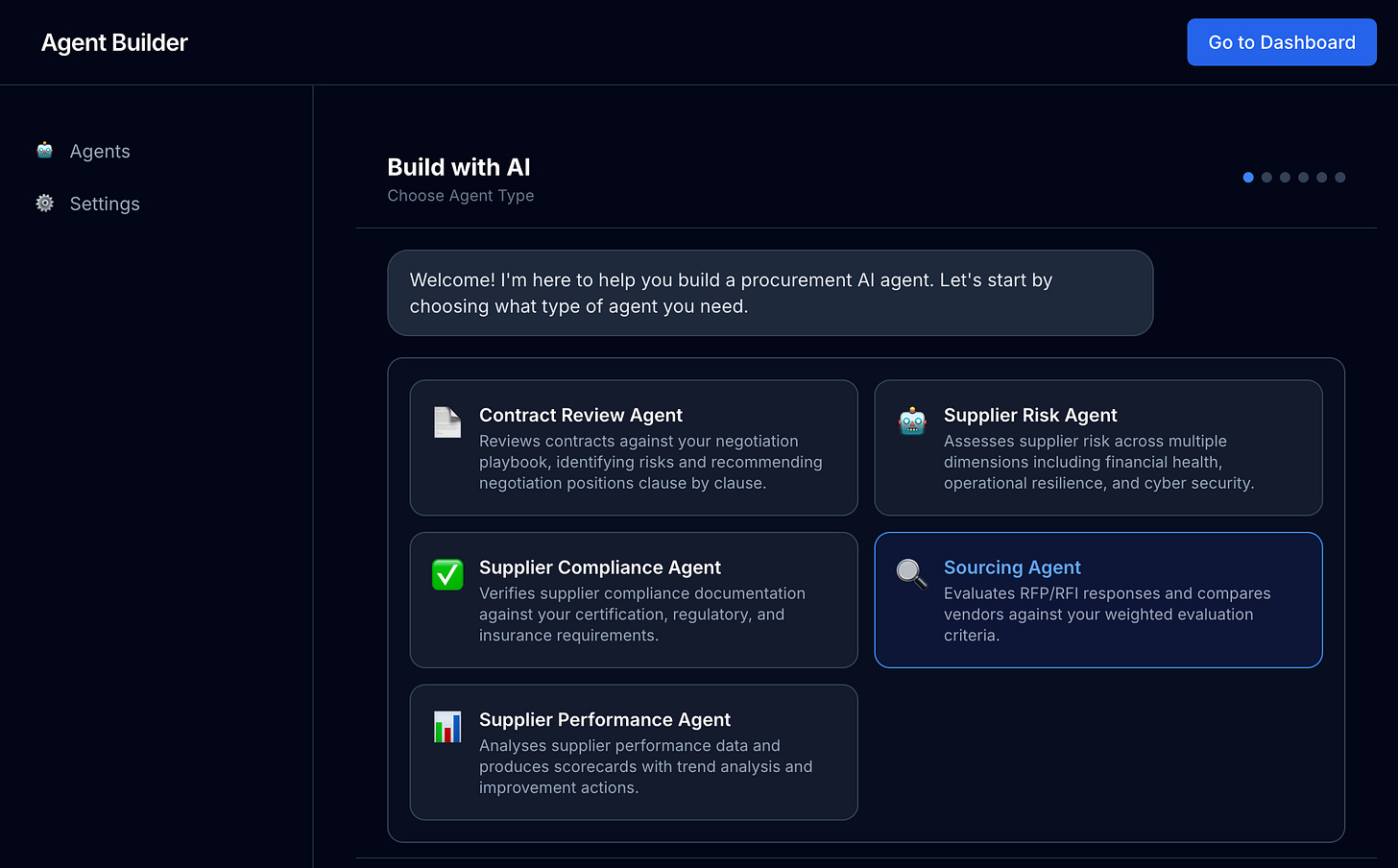

Three weeks ago, out of pure curiosity, I built a full procurement agent SaaS platform over a weekend using Claude Code — not to deploy it, not to launch it as a product. It will never see a public user. I just wanted to understand what it actually takes to architect procurement agents properly: how to structure the tools, the memory, the decision logic, the failure modes. I wanted to understand the system from the inside. That one weekend of deep play completely changed how I think about agent design. I can now map out an agent for any procurement task because I understand the architecture underneath.

Most people do a version of play once or twice, feel like they’ve got the picture, and move on. The people who develop genuine capability keep playing, because the tools change fast enough that your understanding of what’s possible needs to keep up.

Play is necessary. But it’s not sufficient on its own. If all you do is experiment in the abstract, you can demo things confidently without having any practical skill.

Real Work

The skills that matter are built by using AI on your actual work, with your actual data, under your actual constraints.

Not toy examples. Not the clean, well-formatted case study where everything goes right. Your real contracts, with the indemnity clauses that reference legislation you have to look up. Your actual supplier data, with the inconsistencies and duplicates that would take someone a week to clean manually. Your genuine stakeholder communications, where the tone matters and a wrong word creates a problem.

This is where the experimentation becomes competence. And this is where most people stop.

The agents I’ve built at Gatekeeper — across procurement, contract management, and risk — came from exactly this kind of committed application. Not experiments in a sandbox with sample data, but live agents processing real contracts, real supplier records, real compliance requirements, where errors have consequences. That’s also true of the agents I build and use for my own marketing work. Each one required confronting something that didn’t work the way I expected it to, figuring out why, and rebuilding it. That’s the repetition that builds real understanding.

Real work is harder than play because the stakes are higher. You have to trust the output enough to act on it, or explain why you caught something before it went further. You have to develop the calibration that tells you when AI is right, when it’s plausible but wrong, and when to verify before you proceed.

That calibration takes time. It takes repetition. And it cannot be shortcut.

Engagement with the Ecosystem

This one is underrated, and it’s where most procurement professionals leave a significant gap.

Knowing what tools exist isn’t enough. The people who become genuinely indispensable understand the direction of travel: what’s being built, where the real breakthroughs are happening, what the vendors are overpromising versus actually delivering.

This comes from talking to people in the ecosystem. Peer conversations with people actually deploying these tools in production. Events where people are honest about what hasn’t worked, not just what has.

Being selected for the Perplexity Fellowship, a programme that brings together future go-to-market AI leaders, put me in rooms with people building the infrastructure of what’s coming next. Those conversations changed my understanding of the landscape faster than months of individual research could have. You hear things in those rooms that don’t make it into product decks.

And here’s what the ecosystem engagement gives you that the other two can’t: judgment. The ability to separate signal from noise. To hear a vendor claim and know whether it’s credible. To understand why a tool that works brilliantly for one use case fails at a different one.

Without this, you’re solving problems in isolation. With it, you start to understand the broader system — what procurement functions will look like in two years, where the talent gaps are opening, which problems are already solved and which ones genuinely haven’t been cracked yet.

Why All Three Together

This is the part most career advice misses.

Play without real work is dabbling. You can talk about AI tools impressively. You cannot solve real problems with them under pressure.

You’ll see the false promises on LinkedIn, in posts that say something like “See how I used AI to turn 80 hours’ worth of work a week into 10 using this AI prompt - Comment ‘Hustle’”.

It’s a lot of BS from these people.

But we can absolutely deploy AI to have this type of impact. I’m seeing it already.

Real work without ecosystem engagement means you’re solving problems that others have already solved better. You’re not building on what’s already been figured out. You’re reinventing wheels and hitting limitations that already have workarounds.

Ecosystem engagement without real work makes you an AI tourist. You know where everything is on the map. You have never driven the roads. When something breaks, you don’t actually know how to fix it.

The combination — ongoing, iterative, all three at once — is what creates indispensability. Because most people will do one of the three. Some will do two. Very few will maintain all three, consistently, over time.

And that’s exactly why it creates separation.

The Visibility Question

None of this matters if no one knows you’re doing it.

That’s not about personal branding or LinkedIn posts about your AI journey. It’s about the internal reputation that gets you invited into rooms and onto projects.

The flywheel is simple: learn, do, share, get invited, repeat.

The sharing doesn’t have to be loud. It can be a team meeting mention: “I tried something new on the contract review last week — here’s what I learned.” It can be offering to demo something you’ve figured out. It can be becoming the person IT calls when they need a procurement view on an AI conversation.

Over time, the internal reputation compounds. People start coming to you. “You know about AI stuff — what would you use for this?” The reputation creates opportunity, and the opportunity creates more capability.

The key distinction: learning in private is valuable. Learning in public is positioning.

What Happens If You Don’t

This isn’t fearmongering. It’s realism.

AI tools will come to your procurement function. Workflows will change. New capabilities will emerge. This happens whether you participate or not.

The question is whether you shape how it happens in your organisation, or you adapt to the version someone else designed.

The early movers — the ones running pilots now, the ones sitting in IT conversations, the ones who’ve spent evenings trying things on real work — will have twelve, eighteen, twenty-four months of compounded learning by the time this is fully mainstream.

That gap is real. And it grows every month.

The Honest Version

AI won’t make you indispensable on its own. The tool is available to everyone.

What creates separation is the combination of things most people won’t do together: the real experimentation, the actual application to real work, the ongoing engagement with where the field is going.

That’s not an afternoon commitment. It’s not a three-week online course. It’s a genuine investment of time and attention over months, built into how you work.

The people who will look back on this period as the moment everything changed for their careers are the ones who treated it seriously enough to do the work.

The window is open. What you do with it is up to you.